CSIT/Design Optimizations

WARNING - DRAFT UNDER REVIEW - WORK IN PROGRESS

Contents

- 1 Motivation

- 2 Proposed CSIT Design Optimizations

- 3 Proposed CSIT Robot Framework Keywords Optimisations

- 4 CSIT System Design Hierarchy

- 5 Test Lifecycle Abstraction

- 6 RF Keywords Functional Classification

- 7 RF Keywords Naming Guidelines

- 8 Test Suite Naming Guidelines

- 9 Patch Review Checklist

- 10 (to be) Updated CSIT Directory Structure

- 11 Applying CSIT Design Guidelines


Motivation

FD.io CSIT system design needs to meet the continuously expanding requirements of FD.io projects, including VPP, related sub-systems (e.g. plugin applications, DPDK drivers) and FD.io applications (e.g. DPDK applications), as well as a growing number of compute platforms running those applications. With the CSIT project scope and charter including both FD.io continuous testing AND performance trending/comparisons, these evolving requirements further amplify the need for CSIT framework modularity, flexibility and usability.

Following an analysis of the existing CSIT design and the related inventory of CSIT Robot Framework KeyWords and libraries, the CSIT project team decided to make a number of CSIT design optimizations to address these evolving requirements.

This note describes the proposed CSIT design optimizations and positions them in the context of the updated CSIT framework design. It also includes the updated Robot Framework (RF) Keyword (KW) Naming Style Guidelines, to ensure readability and usability of the CSIT test framework and to address existing CSIT KW naming inconsistencies.

Proposed CSIT Design Optimizations

The proposed CSIT design optimizations focus on updated CSIT design guidelines for Robot Framework test and keyword usage, and can be summarized as follows:

- Strictly follow the CSIT Design Hierarchy described in this document;

- Tests MUST use only L2 RF Keywords (KWs);

- All L2 KWs are composed of L1 KWs (either RF or Python) and/or other L2 KWs;

- Python L1 KWs are preferred to RF L1 KWs;

- RF L1 KWs should be reduced to a minimum;

- Test and KW naming should follow the CSIT naming guidelines;

For a more detailed description of how these guidelines apply to developing new CSIT Tests and Keywords, see section "Applying CSIT Design Guidelines".
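The rule that tests use only L2 KWs can be checked mechanically. The sketch below is a hypothetical helper, not part of CSIT: it assumes each test's keyword calls and the inventory of L2 KW names are already available as plain Python data (parsing .robot files is out of scope), and it approximates Robot Framework's keyword matching, which ignores case, spaces, underscores and a leading Given/When/Then/And/But prefix. All keyword names in it are illustrative.

```python
import re
from typing import Dict, List, Set

# Gherkin prefixes that Robot Framework ignores when matching keyword names.
_GHERKIN_PREFIX = re.compile(r"^(given|when|then|and|but)\s+", re.IGNORECASE)


def normalize(name: str) -> str:
    """Approximate RF keyword matching: ignore case, spaces and underscores."""
    return re.sub(r"[\s_]", "", name).lower()


def strip_gherkin(call: str) -> str:
    """Drop one leading Given/When/Then/And/But prefix, if present."""
    return _GHERKIN_PREFIX.sub("", call.strip(), count=1)


def find_layer_violations(tests: Dict[str, List[str]],
                          l2_keywords: Set[str]) -> Dict[str, List[str]]:
    """Return, per test case, the keyword calls that are not L2 KWs."""
    l2 = {normalize(kw) for kw in l2_keywords}
    violations: Dict[str, List[str]] = {}
    for test_name, calls in tests.items():
        bad = [c for c in calls if normalize(strip_gherkin(c)) not in l2]
        if bad:
            violations[test_name] = bad
    return violations


# Usage with hypothetical keyword names.
l2_inventory = {"Configure IPv4 routing on all DUTs",
                "Traffic should pass with no loss"}
tests = {
    "TC01": ["When Configure IPv4 routing on all DUTs",
             "Then Traffic should pass with no loss"],
    "TC02": ["Given configure_ipv4_routing on all DUTs",
             "Vpp Show Errors"],  # hypothetical L1 KW called directly
}
print(find_layer_violations(tests, l2_inventory))
# {'TC02': ['Vpp Show Errors']}
```

Matching on normalized names rather than raw strings keeps the check from reporting false violations when a test writes the same L2 KW with different capitalization or underscores.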

Proposed CSIT Robot Framework Keywords Optimisations

- RF KW renaming:

  - see the naming guidelines in this document;

- RF KW re-classification:

  - break down libraries into multiple files:

    - mainly performance.robot, as everything is currently in one file;

    - group KWs per infrastructure and functional area, similar to the structure of the CSIT release reports;

- RF KW level moves:

  - a few L2 KWs should become L1 KWs, as they are never used in tests and deal with lower-level abstractions;

- RF KW refactoring:

  - merge KWs that are always used together;

  - KWs that are used only 1-2 times should be merged into their parent KW, unless further re-use is expected;

  - for TestSetup and TestTeardown, use one L2 KW only;

    - SuiteSetup and SuiteTeardown already follow this;

- unused KW removal:

  - remove unused and unwanted RF KWs;

    - except for those prepared for future use;

- update CSIT framework documentation:

  - use the content of this document;

  - see the CSIT wiki pages:

    - Getting started with CSIT development;

    - https://wiki.fd.io/view/CSIT/Documentation;
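Candidates for the "unused KW removal" and "merge KWs used 1-2 times" items can be found from a keyword usage inventory. The following is a minimal sketch under stated assumptions: the input is a hypothetical mapping of each L2 KW to the KWs it calls and of each test to the KWs it calls, names are compared exactly for brevity (RF itself matches names case- and space-insensitively), and calls to L1 KWs are simply not counted. Names listed in `keep` (e.g. KWs prepared for future use) are never flagged.

```python
from typing import Dict, Iterable, List


def refactoring_candidates(keyword_bodies: Dict[str, List[str]],
                           test_bodies: Dict[str, List[str]],
                           keep: Iterable[str] = ()) -> Dict[str, List[str]]:
    """Flag L2 KWs for removal (never called) or for merging into their
    parent KW (called 1-2 times, and only from other KWs)."""
    keep = set(keep)
    counts = {name: 0 for name in keyword_bodies}
    for body in list(keyword_bodies.values()) + list(test_bodies.values()):
        for call in body:
            if call in counts:  # calls to L1 KWs are not in the inventory
                counts[call] += 1
    called_from_tests = {c for body in test_bodies.values() for c in body}
    remove = sorted(k for k, n in counts.items() if n == 0 and k not in keep)
    merge = sorted(k for k, n in counts.items()
                   if 1 <= n <= 2
                   and k not in called_from_tests
                   and k not in keep)
    return {"remove": remove, "merge": merge}


# Usage with hypothetical keyword names.
keywords = {
    "Set up performance test": ["Configure DUTs", "Start TG"],
    "Configure DUTs": ["Vpp L1 call"],
    "Start TG": [],
    "Old helper": [],
    "Future helper": [],
}
tests = {"T1": ["Set up performance test"],
         "T2": ["Set up performance test", "Configure DUTs"]}
print(refactoring_candidates(keywords, tests, keep={"Future helper"}))
# {'remove': ['Old helper'], 'merge': ['Start TG']}
```

KWs called directly from tests are excluded from the merge list because a test case has no parent KW to merge them into; the output is a review aid, and the final decision stays with the patch author.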

CSIT System Design Hierarchy

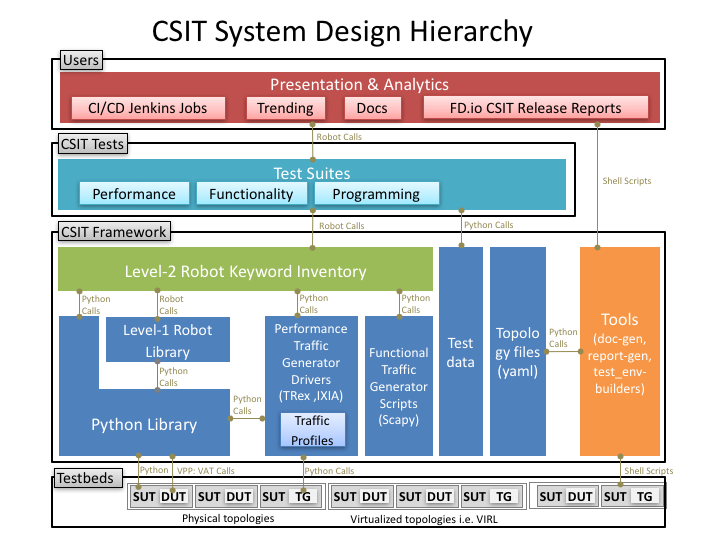

CSIT follows a hierarchical system design with SUTs and DUTs at the bottom level, and presentation level at the top level, with a number of functional layers in-between. The current CSIT design including CSIT framework is depicted in the diagram below.

A brief bottom-up description is provided here:

- SUTs, DUTs, TGs:

  - SUTs - Systems Under Test;

  - DUTs - Devices Under Test;

  - TGs - Traffic Generators;

- Level-1 libraries - Robot and Python:

  - lowest-level CSIT libraries abstracting the specifics of the underlying test environment, SUTs, DUTs and TGs;

  - used commonly across multiple L2 KWs;

  - performance and functional tests:

    - L1 KWs (KeyWords) are implemented as RF libraries and Python libraries;

    - the proposal is to stop implementing L1 functions as RF KWs and to implement them in Python only:

      - HoneyComb is a good example: all L1 KWs are in Python (.py), all L2 KWs are RF KWs;

      - over time, convert the performance and functional L1 RF KWs to Python;

  - performance TG L1 KWs:

    - all L1 KWs are implemented as Python libraries;

    - only TRex is supported today;

    - Ixia support needs to be added;

  - performance data plane traffic profiles:

    - TG-specific stream profiles provide full control of:

      - packet definition: layers, MACs, IPs, ports, and combinations thereof, e.g. IPs and UDP ports;

      - stream definition: different streams can run together, delayed, or one after another;

    - stream profiles are independent of the CSIT framework and can be used in any TRex setup; they can be shared to repeat tests with exactly the same setup;

    - stream profiles are easily extensible: a new stream profile can be created to meet specific test requirements;

    - the same stream profile can be used for different tests with the same traffic needs;

  - functional data plane traffic scripts:

    - Scapy-specific traffic scripts;

- Level-2 libraries - Robot resource files:

  - higher-level CSIT libraries abstracting the functions required for executing tests;

  - L2 KWs are classified into the following functional categories:

    - configuration, test, verification, state report;

    - suite setup, suite teardown;

    - test setup, test teardown;

- Tests - Robot:

  - Test suites with test cases;

  - Functional tests using VIRL environment:

    - VPP;

    - HoneyComb;

  - Performance tests using physical testbed environment:

    - VPP;

    - Testpmd;

- Tools:

  - documentation generator;

  - report generator;

  - testbed environment setup Ansible playbooks;

  - operational debugging scripts;
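To illustrate the proposal to implement L1 functions in Python only, here is a minimal sketch of a Python L1 keyword library. The class, its methods and its in-memory state are hypothetical (a real TG library would drive TRex); what is real Robot Framework behaviour is that public methods of an imported class become keywords (e.g. `start_traffic` becomes "Start Traffic"), names with a leading underscore are not exposed, and `ROBOT_LIBRARY_SCOPE = "GLOBAL"` makes RF share one instance for the whole run. An L2 resource file would import it, for example with `Library    TrafficGeneratorL1` (assuming the file is named TrafficGeneratorL1.py), and call `Start Traffic    1000`.

```python
class TrafficGeneratorL1:
    """Hypothetical L1 library wrapping a traffic generator."""

    # Real RF setting: one library instance shared by the whole test run.
    ROBOT_LIBRARY_SCOPE = "GLOBAL"

    def __init__(self):
        self._rate_pps = 0
        self._running = False

    def start_traffic(self, rate_pps):
        """Exposed to RF as keyword "Start Traffic"."""
        rate = int(rate_pps)  # RF may pass arguments as strings
        if rate <= 0:
            raise ValueError(f"rate_pps must be positive, got {rate_pps!r}")
        self._rate_pps = rate
        self._running = True

    def stop_traffic(self):
        """Exposed as "Stop Traffic"; returns the last configured rate."""
        self._running = False
        return self._rate_pps

    def traffic_should_be_running(self):
        """Verification KW: RF marks the step failed if this raises."""
        if not self._running:
            raise AssertionError("Traffic is not running")

    def _connect(self):
        """Leading underscore: private helper, not exposed as a keyword."""


def keyword_names(library_class):
    """Approximate the keyword names RF derives from public methods."""
    return sorted(
        name.replace("_", " ").title()
        for name in dir(library_class)
        if not name.startswith("_") and callable(getattr(library_class, name))
    )


print(keyword_names(TrafficGeneratorL1))
# ['Start Traffic', 'Stop Traffic', 'Traffic Should Be Running']
```

Keeping L1 logic in Python like this means it can be unit-tested without a testbed, while L2 RF KWs stay thin compositions of such calls.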

Test Lifecycle Abstraction

A well-coded test must follow a disciplined abstraction of the test lifecycle, which includes setup, configuration, test and verification. In addition, to improve test execution efficiency, the common aspects of test setup and configuration shared across multiple test cases should be done only once. Translating these high-level guidelines into Robot Framework terms yields the definition of a well-coded RF test for FD.io CSIT.

Anatomy of Good Tests for CSIT:

- Suite Setup - suite startup Configuration common to all Test Cases in the suite: uses Configuration KWs, Verification KWs, StateReport KWs;

- Test Setup - test startup Configuration common to multiple Test Cases: uses Configuration KWs, StateReport KWs;

- Test Case - uses L2 KWs in RF Gherkin style:

  - prefixed with {Given} - verification of Test setup, reading state: uses Configuration KWs, Verification KWs, StateReport KWs;

  - prefixed with {When} - Test execution: uses Configuration KWs, Test KWs;

  - prefixed with {Then} - verification of Test execution, reading state: uses Verification KWs, StateReport KWs;

- Test Teardown - post-test Configuration cleanup and Verification common to multiple Test Cases: uses Configuration KWs, Verification KWs, StateReport KWs;

- Suite Teardown - suite Configuration cleanup after all Test Cases: uses Configuration KWs, Verification KWs, StateReport KWs;
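The lifecycle above can be modelled as a small xUnit-style runner. This is a simplified, hypothetical sketch, not Robot Framework's implementation: it shows suite setup running once for all Test Cases, test setup and teardown running around every Test Case, Given/When/Then steps running in order, and teardowns running even when a step fails. Unlike RF, it does not handle a failing suite setup (the exception simply propagates).

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Step = Callable[[], None]


@dataclass
class Suite:
    suite_setup: Step
    suite_teardown: Step
    test_setup: Step
    test_teardown: Step
    # Test name -> ordered Given/When/Then steps.
    tests: Dict[str, List[Step]] = field(default_factory=dict)


def run_suite(suite: Suite) -> Dict[str, str]:
    """Run each test with per-test setup/teardown inside one suite setup/teardown."""
    results: Dict[str, str] = {}
    suite.suite_setup()  # shared configuration: done only once per suite
    try:
        for name, steps in suite.tests.items():
            try:
                suite.test_setup()
                for step in steps:  # Given -> When -> Then
                    step()
                results[name] = "PASS"
            except Exception as err:  # a failing step fails only this test
                results[name] = f"FAIL: {err}"
            finally:
                suite.test_teardown()  # cleanup runs even if the test failed
    finally:
        suite.suite_teardown()
    return results


# Usage: record the order in which lifecycle steps run.
trace: List[str] = []


def record(label: str) -> Step:
    return lambda: trace.append(label)


def no_traffic() -> None:
    raise AssertionError("traffic lost")


suite = Suite(record("suite setup"), record("suite teardown"),
              record("test setup"), record("test teardown"),
              {"TC01": [record("given"), record("when"), record("then")],
               "TC02": [record("given"), no_traffic]})
print(run_suite(suite))
# {'TC01': 'PASS', 'TC02': 'FAIL: traffic lost'}
```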

RF Keywords Functional Classification

CSIT RF KWs are classified into the functional categories matching the test lifecycle events described earlier. All CSIT RF L2 and L1 KWs have been grouped into the following functional categories:

- Configuration;

- Test;

- Verification;

- StateReport;

- SuiteSetup;

- TestSetup;

- SuiteTeardown;

- TestTeardown;

All existing CSIT L1 and L2 RF KWs have been classified into one of the above categories in the spreadsheet accompanying this document [mk][add reference to link/xls filename]. Going forward, all coded RF Keywords will be tagged using the functional categories listed above. To improve the readability and usability of CSIT RF KWs, a simple naming convention is proposed for each of the KW categories identified above. Hence, while coding an RF KW, it is important to keep its category in mind.
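One way to keep the tagging consistent is to validate it automatically. The sketch below assumes, hypothetically, that each KW's category is recorded as a keyword tag spelled like the category names above (Robot Framework supports `[Tags]` on user keywords); the tag inventory itself would come from the accompanying spreadsheet or from parsing the libraries, which is not shown.

```python
from enum import Enum
from typing import Dict, Iterable, List


class KwCategory(Enum):
    CONFIGURATION = "Configuration"
    TEST = "Test"
    VERIFICATION = "Verification"
    STATE_REPORT = "StateReport"
    SUITE_SETUP = "SuiteSetup"
    TEST_SETUP = "TestSetup"
    SUITE_TEARDOWN = "SuiteTeardown"
    TEST_TEARDOWN = "TestTeardown"


# Case-insensitive lookup from tag text to category.
_BY_TAG = {c.value.lower(): c for c in KwCategory}


def category_of(keyword: str, tags: Iterable[str]) -> KwCategory:
    """Return the single category tag of `keyword`, or raise ValueError."""
    found = {_BY_TAG[t.lower()] for t in tags if t.lower() in _BY_TAG}
    if len(found) != 1:
        raise ValueError(
            f"{keyword!r}: expected exactly one category tag, found {len(found)}")
    return found.pop()


def group_by_category(
        keyword_tags: Dict[str, List[str]]) -> Dict[KwCategory, List[str]]:
    """Group KW names per category; fails on untagged or multi-tagged KWs."""
    groups: Dict[KwCategory, List[str]] = {c: [] for c in KwCategory}
    for keyword, tags in keyword_tags.items():
        groups[category_of(keyword, tags)].append(keyword)
    return groups


# Usage with hypothetical KW names; non-category tags such as "perf" are ignored.
groups = group_by_category({
    "Configure IPv4 routing on all DUTs": ["Configuration", "perf"],
    "Traffic should pass with no loss": ["verification"],
})
print(groups[KwCategory.VERIFICATION])
# ['Traffic should pass with no loss']
```

Requiring exactly one category tag per KW mirrors the rule that every KW belongs to one of the categories above, and makes unclassified KWs fail loudly during review.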

RF Keywords Naming Guidelines

Readability counts: "...code is read much more often than it is written." Hence, following good and consistent grammar is important when writing RF Keywords and Tests.

All CSIT test cases are coded in Gherkin style and include only L2 KW references. L2 KWs are coded in a simple style and reference L2 KWs, L1 RF KWs and L1 Python KWs. To improve readability, the proposal is to use the same grammar for both RF KW styles, and to formalize the grammar of the English sentences used for naming RF KWs.

RF KW names are short sentences expressing a functional description of the command. They must follow English sentence grammar in one of the following forms:

- Imperative - verb-object(s): "Do something", verb in base form.

- Declarative - subject-verb-object(s): "Subject does something", verb in a third-person singular present tense form.

- Affirmative - modal_verb-verb-object(s): "Subject should be something", "Object should exist", verb in base form.

- Negative - modal_verb-Not-verb-object(s): "Subject should not be something", "Object should not exist", verb in base form.

The passive form MUST NOT be used. However, using a past participle as an adjective is okay. See the usage examples.
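Reviewers could get a first-pass hint about which grammar form a KW name uses with a simple heuristic. This is a hypothetical review aid, not a CSIT tool: a small hand-picked set of base-form verbs stands in for a real part-of-speech tagger, so names using other verbs come back as "unknown", and the passive-voice rule still needs human review.

```python
from typing import Set

# Hypothetical, deliberately small verb list; extend as needed.
BASE_VERBS: Set[str] = {"add", "check", "clear", "configure", "create",
                        "delete", "initialize", "send", "set", "show",
                        "start", "stop", "verify"}


def classify_kw_name(name: str) -> str:
    """Return 'imperative', 'declarative', 'affirmative', 'negative'
    or 'unknown' for an RF KW name (heuristic only)."""
    words = name.lower().split()
    if not words:
        raise ValueError("empty keyword name")
    for i, word in enumerate(words):
        if word == "should":  # modal verb forms
            negated = i + 1 < len(words) and words[i + 1] == "not"
            return "negative" if negated else "affirmative"
    if words[0] in BASE_VERBS:  # verb-object(s): "Do something"
        return "imperative"
    # subject-verb-object(s): third-person singular verb after the subject
    if any(w.endswith("s") and w[:-1] in BASE_VERBS for w in words[1:]):
        return "declarative"
    return "unknown"


for kw in ("Configure IPv4 routing on all DUTs", "DUT starts traffic",
           "Interface should be up", "Object should not exist"):
    print(f"{kw!r}: {classify_kw_name(kw)}")
```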

The following sections list the applicability of the above grammar forms to the different RF KW categories, with usage examples, both good and bad.