VPP/HostStack

Description

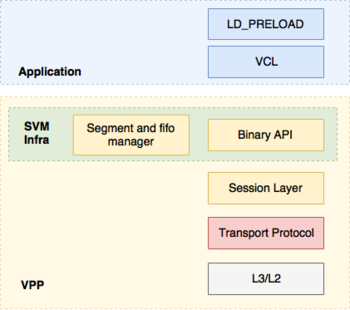

VPP's host stack is a user-space implementation of a number of transport, session and application layer protocols that leverages VPP's existing protocol stack. It consists of roughly four major components:

- Session Layer that accepts pluggable transport protocols

- Shared memory mechanisms for pushing data between VPP and applications

- Transport protocol implementations (e.g. TCP, QUIC, TLS, UDP)

- VPP Comms Library (VCL) and LD_PRELOAD library

Documentation

Set Up Dev Environment - Explains how to set up a VPP development environment and the requirements for using the build tools

Session Layer - Goes over the main features of the session layer

VCL - Description and configuration

TLS Application - Describes the TLS Application Layer protocol implementation

Host Stack Test Framework - Architecture description of host stack's test framework

Getting Started

Applications can link against the following APIs for host-stack service:

- Builtin C API. It can only be used by applications hosted within VPP

- "Raw" session layer API. It does not offer any support for async communication

- VCL API that offers a POSIX-like interface. It comes with its own epoll implementation.

- POSIX API through LD_PRELOAD
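As a rough illustration of the last option, the sketch below is an ordinary TCP echo round trip written only against the standard POSIX-style socket calls (`socket`, `bind`, `listen`, `accept`, `connect`, `send`, `recv`). This is the kind of unmodified code the LD_PRELOAD path is meant to accept. It runs over the kernel loopback as written; whether a given interpreter or binary behaves correctly under the preload library is an assumption that has to be checked per application, and the helper names `run_echo_server` and `echo_roundtrip` are hypothetical.

```python
import socket
import threading


def run_echo_server(listener: socket.socket) -> None:
    """Accept one connection and echo every byte back until the peer closes."""
    conn, _addr = listener.accept()
    with conn:
        while True:
            data = conn.recv(4096)
            if not data:  # peer finished sending
                break
            conn.sendall(data)


def echo_roundtrip(payload: bytes, host: str = "127.0.0.1") -> bytes:
    """Start a one-shot echo server on an ephemeral port and push payload through it."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as listener:
        listener.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
        listener.bind((host, 0))  # port 0: let the stack pick a free port
        listener.listen(1)
        port = listener.getsockname()[1]

        server = threading.Thread(target=run_echo_server, args=(listener,), daemon=True)
        server.start()

        with socket.create_connection((host, port), timeout=5) as client:
            client.sendall(payload)
            client.shutdown(socket.SHUT_WR)  # signal end of data to the server
            chunks = []
            while True:
                chunk = client.recv(4096)
                if not chunk:  # server closed after echoing everything
                    break
                chunks.append(chunk)

        server.join(timeout=5)
    return b"".join(chunks)


if __name__ == "__main__":
    print(echo_roundtrip(b"hello host stack"))
```

Nothing in the sketch refers to VPP, which is the point of the POSIX option: the application is left as is and the socket calls are intercepted at load time. Applications that need finer control would use the VCL API directly instead.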

A number of test applications can be used to exercise these APIs. Many of the examples in the tutorials section below assume that two VPP instances have been brought up and configured so that there is network connectivity between them. The builtin ping tool can be used to verify that connectivity. By convention, the first VPP instance (vpp1) is the one the server attaches to and the second instance (vpp2) is the one the client application attaches to. For illustrative purposes all examples use TCP as the transport protocol, but any of the other available protocols could be used.

One of the simplest ways to test that the host stack is running is to use the builtin echo applications. On vpp1, from the CLI, run:

# test echo server uri tcp://vpp1_ip/port

and on vpp2:

# test echo client uri tcp://vpp1_ip/port

For more details on how to configure the client/server apps for throughput and CPS (connections per second) testing, see here; for more examples, check the tutorials section.
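When the two instances are brought up by a script, the CLI lines above can be generated rather than typed. The following is a minimal sketch that builds the `tcp://vpp1_ip/port` URI and the matching server and client commands, plus a connectivity check with the builtin ping tool. The function names and the example address and port are hypothetical, the exact `ping` argument form is assumed, and only IPv4 is handled because the source does not show how other address families are written in the URI.

```python
import ipaddress


def echo_uri(server_ip: str, port: int, proto: str = "tcp") -> str:
    """Build a session URI of the form proto://ip/port, as used by the echo apps."""
    ipaddress.IPv4Address(server_ip)  # raises ValueError on a malformed address
    if not 0 < port < 65536:
        raise ValueError(f"port out of range: {port}")
    return f"{proto}://{server_ip}/{port}"


def echo_commands(server_ip: str, port: int) -> dict:
    """Return the CLI lines to enter on each instance, keyed by instance name."""
    uri = echo_uri(server_ip, port)
    return {
        # vpp1 hosts the server
        "vpp1": [f"test echo server uri {uri}"],
        # vpp2 first checks connectivity, then starts the client
        "vpp2": [f"ping {server_ip}", f"test echo client uri {uri}"],
    }


if __name__ == "__main__":
    # 10.0.0.1 and 1234 are placeholder values for vpp1_ip and port
    for instance, lines in echo_commands("10.0.0.1", 1234).items():
        for line in lines:
            print(f"{instance}# {line}")
```

Validating the address and port before building the URI keeps a typo from reaching the CLI, where it would otherwise surface only as a failed connection.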

Tutorials/Test Apps

Test Builtin Echo Client/Server Apps

Test External Echo Client/Server Apps

Test VCL Echo Client/Server Apps

Tests

Make test VCL tests source code

Unit tests for TCP, session layer and svm infra

CSIT trending

Running List of Presentations

- DPDK Summit North America 2017

- FD.io Mini Summit KubeCon 2017

- FD.io Mini Summit KubeCon Europe 2018

- FD.io Mini Summit KubeCon NA 2018 slides and video

- FD.io Mini Summit KubeCon EU 2019 slides and video

- EnvoyCon 2020 slides and video

- EnvoyCon 2024 video